How to Implement AI in Business: From Pilot to Scalable Impact

Many companies experiment with AI but struggle to see real results. This guide explains how to implement AI in business, moving from pilots to scalable, measurable impact.

Jan 28, 2026

In many companies, AI initiatives don’t fail loudly. They stall quietly.

Pilots get approved, demos look promising, teams experiment, and months later leadership is still asking the same question: is this actually changing how the business operates?

Implementing AI in business today is less about access to technology and more about embedding it into real processes, decisions, and accountability. This article focuses on what that looks like in practice and how to move from pilots to sustained impact.

What “implementing AI in business” really means today

In many organizations, “AI implementation” still means building a proof of concept: a model that predicts something, a chatbot that answers a subset of questions, or a dashboard with more advanced analytics.

Those efforts are not wrong, but they are not implementation either.

AI moves beyond experimentation only when three things happen at the same time:

- The AI output is embedded into a business workflow people already use to make decisions or take action.

- There is clear ownership over the outcome the AI influences.

- Success is measured through business KPIs, not model performance alone.

The same tool can be either a pilot or a real implementation, depending on how it is used. A churn model that sits in a notebook is a pilot; the same model feeding a retention queue that a team owns and reports on is an implementation.

This distinction matters in practice.

A pilot answers “can this work?”

An operational AI solution answers “is this changing how we work and what results we get?”

For example, predicting customer churn with a model is a pilot. Integrating that prediction into sales or retention workflows, assigning ownership to a team, and measuring churn reduction over time is implementation.
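As a rough illustration of the measurement half of that example, the sketch below compares churn between customers who went through a retention workflow and a holdout group that did not. The `CustomerOutcome` record, the field names, and the sample data are hypothetical and invented for illustration only; a real comparison would also need a sound experiment design and a significance check.

```python
from dataclasses import dataclass


@dataclass
class CustomerOutcome:
    """Hypothetical record: one customer at the end of a review period."""
    customer_id: str
    in_retention_workflow: bool  # True if the churn score triggered outreach
    churned: bool


def churn_rate(outcomes):
    """Share of customers in the list who churned (0.0 for an empty list)."""
    if not outcomes:
        return 0.0
    return sum(o.churned for o in outcomes) / len(outcomes)


def churn_reduction(outcomes):
    """Holdout churn rate minus workflow churn rate, in absolute terms."""
    treated = [o for o in outcomes if o.in_retention_workflow]
    holdout = [o for o in outcomes if not o.in_retention_workflow]
    return churn_rate(holdout) - churn_rate(treated)


# Made-up sample data, not real results.
sample = [
    CustomerOutcome("c1", True, False),
    CustomerOutcome("c2", True, False),
    CustomerOutcome("c3", True, True),
    CustomerOutcome("c4", True, False),
    CustomerOutcome("c5", False, True),
    CustomerOutcome("c6", False, False),
    CustomerOutcome("c7", False, True),
    CustomerOutcome("c8", False, False),
]

print(f"Churn reduction: {churn_reduction(sample):.2f}")
```

The point of the sketch is that the number being tracked is a business KPI (churn), owned by the team running the workflow, rather than the model's accuracy.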

When AI works well, it often feels unremarkable. Decisions get faster. Manual effort goes down. Exceptions become clearer. Teams trust the output because it fits naturally into how they already operate.

The challenge is crossing that line into production, where AI becomes part of daily operations instead of a standalone initiative.

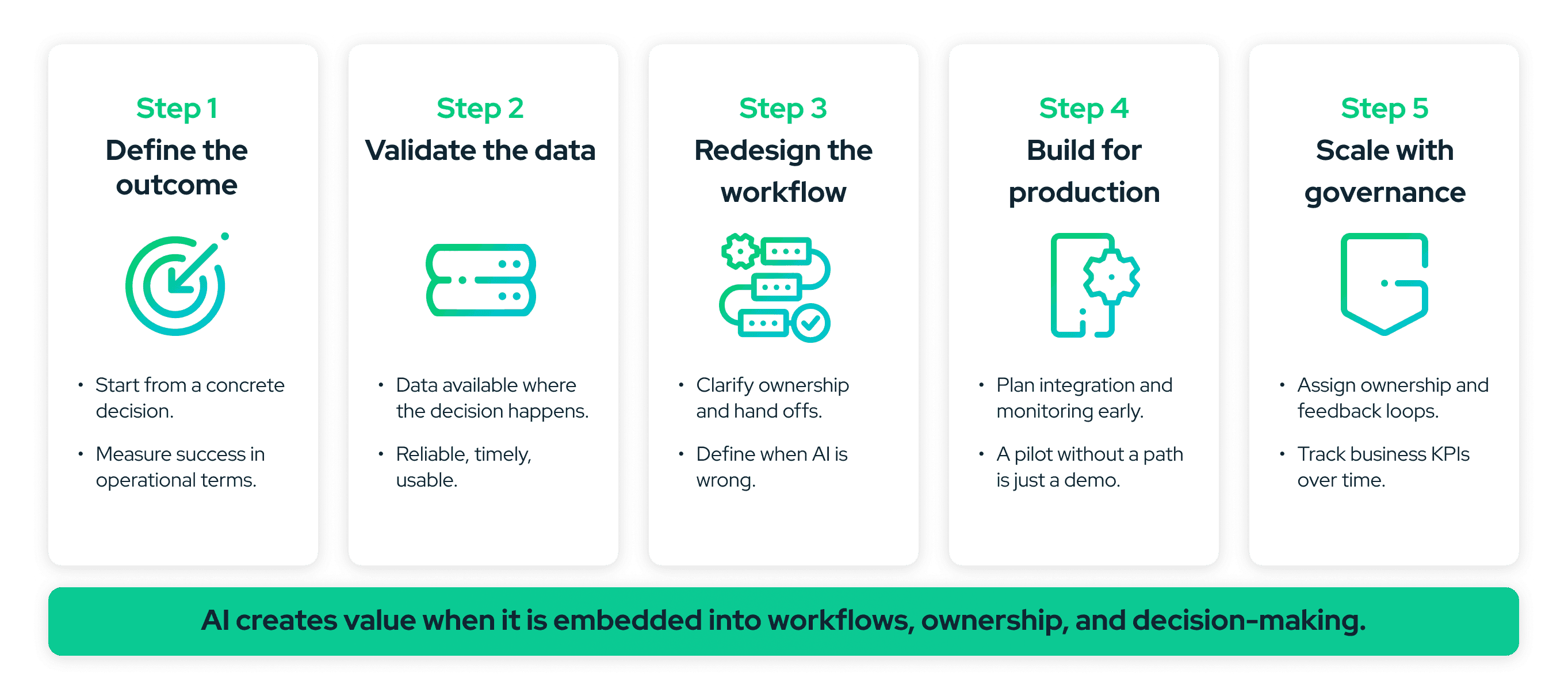

How to implement AI in business step by step

There is no universal formula, but there are consistent patterns that work. Below is the practical path we see delivering results most often.

Step 1: Start with a business outcome, not a model

Effective AI initiatives begin with a concrete business outcome tied to how the organization runs today.

Examples include:

- Reducing manual review time in a specific process.

- Improving response times in customer support.

- Increasing approval rates without increasing risk.

- Improving forecasting accuracy for a recurring decision.

At this stage, the question is not which model to use, but which decision or process needs to improve.

A simple test helps here: if you cannot explain the goal without mentioning AI, the goal is probably not clear enough yet.

This step tends to stall when teams frame success in technical terms instead of operational ones. The model then gets optimized in isolation, without changing anything downstream.

Step 2: Check data maturity where the work actually happens

Data maturity is often discussed at a high level, but implementation lives in very concrete places.

The real questions are:

- Is the data accessible where the decision is made?

- Is it reliable enough to influence action?

- Is it updated at the cadence the process requires?

Many AI initiatives struggle because data exists in theory but is fragmented across systems, inconsistently defined, or disconnected from operational workflows.
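The three questions above can be turned into a simple per-field check. The sketch below is one possible shape, not a standard: the `FieldSnapshot` record, the field name, and the thresholds are all assumptions that would need to be agreed with whoever owns the process.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class FieldSnapshot:
    """Hypothetical summary of one data field as seen at the decision point."""
    name: str
    available_at_decision_point: bool
    non_null_share: float      # fraction of records with a usable value, 0..1
    last_updated: datetime


def readiness_issues(field, now, min_non_null_share, max_age):
    """Return a list of plain-language issues; an empty list means no flags."""
    issues = []
    if not field.available_at_decision_point:
        issues.append("not accessible where the decision is made")
    if field.non_null_share < min_non_null_share:
        issues.append("too many missing values to rely on")
    if now - field.last_updated > max_age:
        issues.append("updated less often than the process requires")
    return issues


# Made-up example values; the thresholds are assumptions, not recommendations.
now = datetime(2026, 1, 28, 12, 0)
field = FieldSnapshot("order_status", True, 0.72, now - timedelta(days=3))
issues = readiness_issues(field, now, min_non_null_share=0.95,
                          max_age=timedelta(days=1))
for issue in issues:
    print(f"{field.name}: {issue}")
```

Running a check like this against the handful of fields a single decision depends on is usually more informative than an organization-wide maturity score.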

In our work with Auto Approve, a U.S.-based company specializing in auto loan refinancing, early AI initiatives revealed that while data was available, it was not consistently usable at the decision point. Narrowing scope and improving data quality around a single approval workflow unlocked progress that broader efforts could not.

This step often surfaces uncomfortable truths: manual workarounds, shadow spreadsheets, inconsistent definitions. That is not a reason to stop. It is a signal to focus on where data quality can realistically support an AI-driven decision.

If you want a deeper look at how these gaps typically show up, we break them down in more detail in our AI readiness perspective.

Step 3: Redesign the workflow before adding AI

AI rarely fixes broken processes. If a workflow is unclear, slow, or overloaded with exceptions, adding AI on top usually amplifies the problem.

Before introducing AI, teams should be able to answer:

- Where does this decision live today?

- Who acts on it?

- What happens if the AI is wrong?

This is where implementation becomes a design exercise, not a modeling one.

In projects with Esquire Depositions, a U.S.-based leader in legal deposition services and part of a private equity portfolio, the biggest gains came not from model sophistication, but from clarifying decision handoffs and reducing manual steps before introducing AI-driven automation.

Only after the workflow makes sense does AI become a force multiplier instead of a source of friction.

Step 4: Build for production early

One reason many pilots never scale is that production concerns are postponed. Integration, security, monitoring, and performance are treated as “later problems.”

In practice, those constraints determine whether an AI initiative survives.

A production-ready implementation addresses these questions early:

- How the AI integrates with existing systems.

- How outputs are monitored and validated.

- How failures are detected and handled.

- How performance is measured over time.
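A minimal sketch of what validation, failure handling, and measurement can look like around a single model call is shown below. It assumes the model returns a probability between 0 and 1; `score_with_guardrails`, the logger name, and the two stand-in models are hypothetical names introduced for illustration.

```python
import logging
import time

logger = logging.getLogger("ai_decisions")


def score_with_guardrails(model_fn, features, fallback_score=None):
    """Call a scoring function, validate its output, and record what happened.

    Returns a dict with the score (or the fallback), a status, and latency.
    """
    started = time.perf_counter()
    status = "ok"
    try:
        score = model_fn(features)
        # Validate: this sketch assumes scores are probabilities in [0, 1].
        if not isinstance(score, (int, float)) or not 0.0 <= score <= 1.0:
            status = "invalid_output"
            score = fallback_score
    except Exception:
        # Failure detected: log it and return the fallback instead of crashing.
        logger.exception("model call failed")
        status = "error"
        score = fallback_score
    latency_ms = (time.perf_counter() - started) * 1000
    logger.info("status=%s latency_ms=%.1f", status, latency_ms)
    return {"score": score, "status": status, "latency_ms": latency_ms}


# Hypothetical stand-ins for a real model.
def healthy_model(features):
    return 0.8


def broken_model(features):
    raise RuntimeError("upstream service unavailable")


print(score_with_guardrails(healthy_model, {"x": 1})["status"])
print(score_with_guardrails(broken_model, {"x": 1})["status"])
```

When the status is anything other than `ok`, the calling workflow can fall back to the existing manual path, which is one concrete answer to how failures are handled.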

This does not mean overengineering from day one. It means acknowledging that a successful pilot without a clear production path is still just a demo.

Step 5: Scale with governance and feedback loops

Once an AI solution is live, implementation is not finished. Models drift. Data changes. Business priorities evolve.

Sustained impact requires:

- Clear ownership for AI-influenced decisions.

- Governance around data and model updates.

- Feedback loops between users and the system.

- Regular review of business KPIs alongside AI outputs.
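One common way to notice that data has changed is the Population Stability Index (PSI), which compares the distribution of a score or input between a baseline period and a recent one. The sketch below is a plain-Python version under simple assumptions: the bin edges and sample values are made up, and any alert threshold is a convention to agree on rather than a fixed rule.

```python
import math


def population_stability_index(baseline, current, edges):
    """PSI between two samples of a numeric value, using shared bin edges.

    `edges` are ascending cut points; values fall into len(edges) + 1 bins.
    """
    if not baseline or not current:
        raise ValueError("both samples must be non-empty")

    def bin_shares(values):
        counts = [0] * (len(edges) + 1)
        for v in values:
            idx = sum(v >= e for e in edges)  # number of edges at or below v
            counts[idx] += 1
        # Small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    base = bin_shares(baseline)
    curr = bin_shares(current)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, curr))


# Made-up scores for illustration only.
edges = [0.25, 0.5, 0.75]
last_quarter = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9]
this_month = [0.6, 0.7, 0.8, 0.9, 0.8, 0.9, 0.95, 0.85]

psi = population_stability_index(last_quarter, this_month, edges)
print(f"PSI: {psi:.2f}")
```

A rising PSI does not say the model is wrong; it says the inputs or scores no longer look like the period the model was validated on, which is a prompt for the owner to review the business KPIs alongside it.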

In our collaboration with Hanwha Vision, a global video surveillance and security technology company, scaling AI-driven object recognition across multiple operational contexts required not just technical refinement, but ongoing feedback between operators, data teams, and business owners to keep the system aligned with real-world conditions.

At this stage, AI becomes part of the operating model, not a special project.

Patterns that separate pilots from real impact

Looking across AI initiatives that move beyond experimentation, a few patterns consistently show up.

Signals that AI is scaling successfully

When AI is moving beyond experimentation, its impact starts to show up in everyday operations. These signals point to initiatives that are influencing real decisions and outcomes over time, rather than living on the side as isolated experiments.

- There is a clear owner accountable for outcomes over time.

- AI outputs live inside existing tools and workflows.

- Success is reviewed through business KPIs, not isolated metrics.

- Teams expect iteration and adjust models as conditions change.

Early signals an initiative is starting to drift

By contrast, initiatives that begin to lose momentum rarely fail all at once. The early signs usually emerge quietly, through small disconnects between insight, action, and accountability.

- AI insights exist, but no one acts on them.

- Teams debate model accuracy without revisiting the decision it supports.

- Ownership shifts or disappears after launch.

- Metrics are reviewed once, then forgotten.

Catching these signals early often determines whether an initiative compounds value or quietly stalls.

None of these issues are fundamentally technical. They are operational and organizational, which is why AI implementation is ultimately a business discipline, not just a data science one.

From experimentation to execution

The shift from AI pilots to real impact does not happen all at once. It happens when organizations stop asking “can we build this?” and start asking “is this changing how we operate?”

Answering that question clearly is often the difference between experimentation that feels busy and implementation that actually delivers value.

If your teams are already experimenting with AI but cannot yet tell how much of that effort is translating into operational change, our AI & Data Strategy Consulting Services can help frame where to focus and how to move forward.
